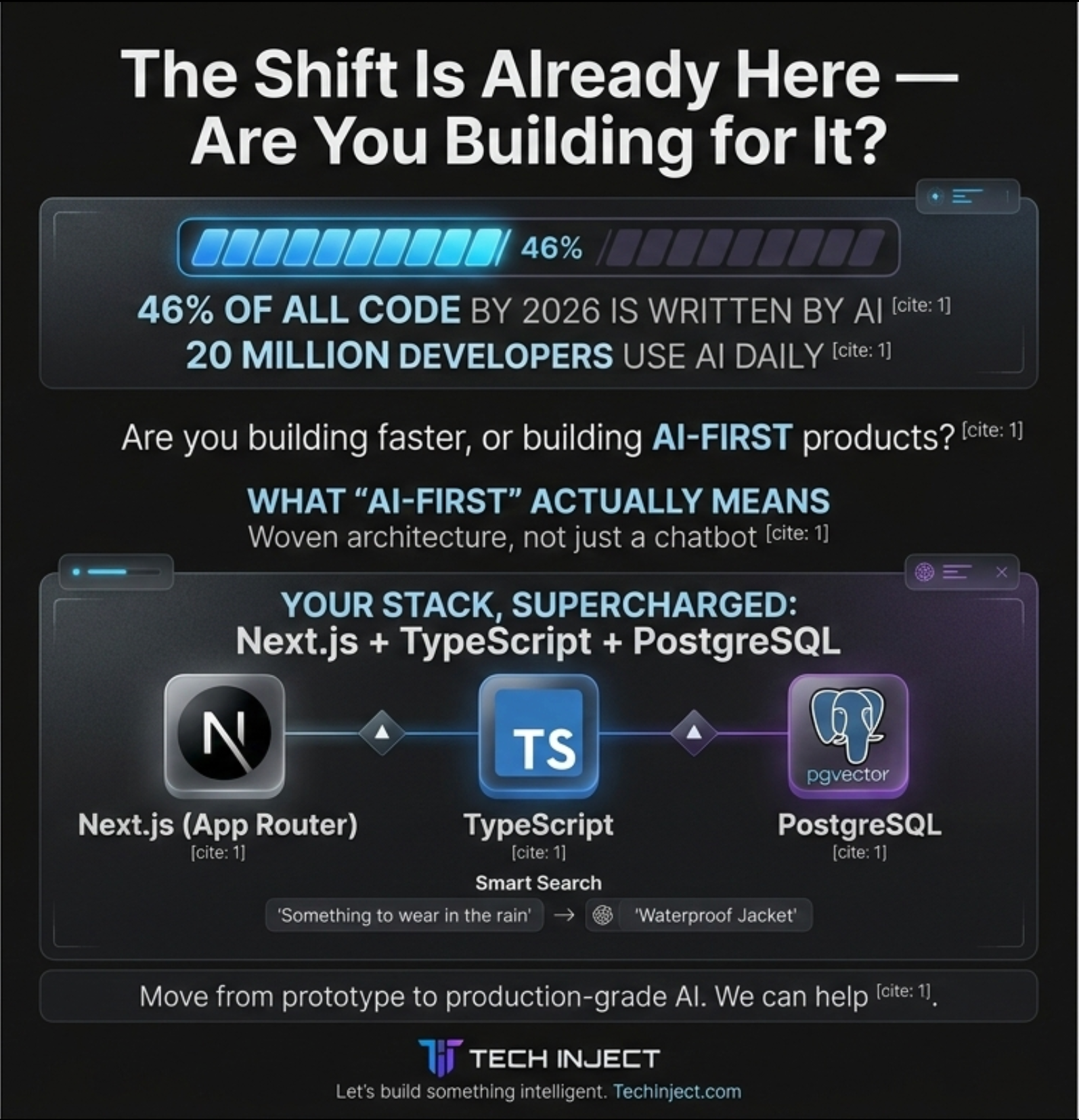

The Shift Is Already Here — Are You Building for It?

In 2026, 46% of all code written by active developers comes from AI. Over 20 million developers use AI coding assistants daily. This isn't a trend anymore — it's the baseline.

But here's the real question: are you just using AI to write code faster, or are you building products where AI IS the product?

There's a massive difference. And the developers and agencies who understand that difference are the ones winning contracts, shipping faster, and building things that actually matter.

What 'AI-First' Actually Means

AI-first doesn't mean slapping a chatbot on your homepage. It means designing your architecture so that AI is woven into the core user experience — from the moment someone lands on your site to the moment they convert.

Think:

- A search bar that understands intent, not just keywords

- A dashboard that surfaces insights before the user asks

- Content that adapts to who's reading it

- A backend that gets smarter with every interaction

This is what clients mean when they say 'I want AI in my product.' And this is exactly what the Next.js + TypeScript + PostgreSQL stack is built for.

Your Stack, Supercharged: Next.js + TypeScript + PostgreSQL

You don't need to switch stacks to go AI-first. Here's how each piece of your existing stack becomes an AI powerhouse:

Next.js (App Router) — Server Actions and Route Handlers make it trivial to call LLMs server-side. Stream responses directly to the UI using the Vercel AI SDK. No extra backend needed.

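TypeScript: Type-safe AI. Define your prompt schemas, tool call signatures, and response shapes as types, then validate the model's output against them at runtime. Types alone disappear after compilation, so the runtime check is what stops you guessing what the model returns.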
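
As a minimal sketch of what type-safe AI can look like in practice, the block below defines a response shape and a parser that rejects model output which does not match it. The `ProductCopy` type and `parseProductCopy` function are hypothetical names used for illustration; in a real project a schema library such as Zod would typically replace the hand-written checks.

```typescript
// Hypothetical response shape we ask the model to return as JSON.
interface ProductCopy {
  title: string;
  description: string;
  tags: string[];
}

// Result type so callers have to handle the failure case explicitly.
type ParseResult<T> =
  | { ok: true; value: T }
  | { ok: false; error: string };

// Parse raw model text and verify at runtime that it matches ProductCopy.
// TypeScript types are erased at runtime, so the checks are written out by hand.
function parseProductCopy(raw: string): ParseResult<ProductCopy> {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return { ok: false, error: "model output is not valid JSON" };
  }
  if (typeof data !== "object" || data === null) {
    return { ok: false, error: "expected a JSON object" };
  }
  const { title, description, tags } = data as Record<string, unknown>;
  if (typeof title !== "string" || typeof description !== "string") {
    return { ok: false, error: "title and description must be strings" };
  }
  if (!Array.isArray(tags) || !tags.every((t): t is string => typeof t === "string")) {
    return { ok: false, error: "tags must be an array of strings" };
  }
  return { ok: true, value: { title, description, tags } };
}
```

Returning a result object instead of throwing keeps the "model returned something unexpected" path visible in the caller's types, which is where retries or fallbacks usually belong.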

PostgreSQL + pgvector — Your database is now a vector store. Store embeddings alongside your regular data. Run semantic similarity searches with a single SQL query. No separate vector DB required.

This trio is arguably the most production-ready AI stack available today.

The 3 AI Features Every Modern Web App Should Have

1. Smart Search (Semantic Search with pgvector)

Forget LIKE queries. With pgvector and OpenAI embeddings, your search understands meaning. A user searching 'something to wear in the rain' finds 'waterproof jacket' — even if those words never appear together in your database.

How it works:

- Generate embeddings for your content using OpenAI's text-embedding-3-small model

- Store them as vector columns in PostgreSQL

- On search, embed the query and run a cosine similarity search

- Return ranked results by semantic relevance
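
The steps above can be sketched roughly as follows. This is an illustration under stated assumptions, not a definitive implementation: the `documents` table, its `embedding` column, and every function name here are hypothetical, and the embedding call itself is left out. The in-memory ranking mirrors what the SQL does, where `<=>` is pgvector's cosine distance operator.

```typescript
// A stored row. In Postgres, `embedding` would be a vector(1536) column
// when using text-embedding-3-small at its default size.
interface SearchDoc {
  id: number;
  title: string;
  embedding: number[];
}

// Cosine similarity: dot(a, b) / (|a| * |b|). Ranges from -1 to 1.
function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) {
    throw new Error("vectors must have the same length");
  }
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    const x = a[i] ?? 0;
    const y = b[i] ?? 0;
    dot += x * y;
    normA += x * x;
    normB += y * y;
  }
  if (normA === 0 || normB === 0) return 0; // avoid dividing by zero
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// In-memory equivalent of the ranking step, handy for tests and tiny datasets.
function rankBySimilarity(query: number[], docs: SearchDoc[], limit = 5): SearchDoc[] {
  return [...docs]
    .sort(
      (d1, d2) =>
        cosineSimilarity(query, d2.embedding) - cosineSimilarity(query, d1.embedding),
    )
    .slice(0, limit);
}

// pgvector accepts a vector as a text literal such as '[0.1,0.2,0.3]'.
function toVectorLiteral(embedding: number[]): string {
  return `[${embedding.join(",")}]`;
}

// The same ranking in SQL. `<=>` returns cosine distance, so 1 - distance
// is the cosine similarity. Table and column names are assumptions.
const SEARCH_SQL = `
  SELECT id, title, 1 - (embedding <=> $1::vector) AS similarity
  FROM documents
  ORDER BY embedding <=> $1::vector
  LIMIT $2`;

// Build the parameterized query to hand to a Postgres client.
function buildSearchQuery(queryEmbedding: number[], limit = 5) {
  return { text: SEARCH_SQL, values: [toVectorLiteral(queryEmbedding), limit] };
}
```

Passing the embedding as a parameter rather than interpolating it into the SQL string keeps the query safe to reuse with any query text a user types.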

2. AI Personalization

Use user behavior stored in Postgres to build the context you send to your LLM. Feed it what the user has viewed, clicked, and purchased, and let it generate personalized recommendations, summaries, or next steps. No third-party personalization engine needed.
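
One way to picture this is a small function that turns stored events into prompt context. The sketch below assumes a hypothetical `user_events` table whose rows look like `UserEvent`; the prompt wording and the `buildPersonalizationPrompt` name are illustrative only.

```typescript
// One row from a hypothetical user_events table.
interface UserEvent {
  kind: "viewed" | "clicked" | "purchased";
  item: string;
  at: string; // ISO 8601 timestamp
}

// Turn recent behavior into prompt context, newest first, capped at maxEvents
// so the prompt stays a predictable size.
function buildPersonalizationPrompt(events: UserEvent[], maxEvents = 20): string {
  const recent = [...events]
    .sort((a, b) => Date.parse(b.at) - Date.parse(a.at))
    .slice(0, maxEvents);
  const activity = recent.map((e) => `- ${e.kind}: ${e.item}`);
  return [
    "You are a recommendation assistant for an online store.",
    "Recent activity for this user (newest first):",
    ...activity,
    "Suggest three products this user is likely to want next, with one sentence of reasoning each.",
  ].join("\n");
}
```

Capping the number of events is the simplest way to keep token usage bounded; a production version might instead summarize older history or weight purchases above views.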

3. AI-Generated Content Pipelines

Build a Next.js Server Action that takes a topic, calls OpenAI, and returns structured content — blog drafts, product descriptions, email copy. Pair it with a Postgres table to store, version, and review outputs before publishing. This is a real AI pipeline, not a toy.
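
The store, version, and review part of such a pipeline can be sketched without any model call at all. The block below is a minimal in-memory model of it, assuming a hypothetical `content_drafts` table with one row per `ContentDraft`; the function names and the three status values are illustrative choices, not a prescribed schema.

```typescript
type DraftStatus = "pending_review" | "approved" | "rejected";

// One row in a hypothetical content_drafts table.
interface ContentDraft {
  topic: string;
  version: number;
  body: string;
  status: DraftStatus;
}

// Store a newly generated draft as the next version for its topic.
// Every new draft starts in review; nothing is published automatically.
function addDraft(drafts: ContentDraft[], topic: string, body: string): ContentDraft[] {
  const latest = drafts
    .filter((d) => d.topic === topic)
    .reduce((max, d) => Math.max(max, d.version), 0);
  return [...drafts, { topic, version: latest + 1, body, status: "pending_review" }];
}

// Record a reviewer's decision. Only drafts still pending review can change.
function reviewDraft(
  drafts: ContentDraft[],
  topic: string,
  version: number,
  decision: "approved" | "rejected",
): ContentDraft[] {
  return drafts.map((d) =>
    d.topic === topic && d.version === version && d.status === "pending_review"
      ? { ...d, status: decision }
      : d,
  );
}

// The draft to publish: the highest approved version, if there is one.
function publishableDraft(drafts: ContentDraft[], topic: string): ContentDraft | undefined {
  return drafts
    .filter((d) => d.topic === topic && d.status === "approved")
    .sort((a, b) => b.version - a.version)[0];
}
```

In a real Server Action, `addDraft` would become an INSERT after the model call returns, and `reviewDraft` an UPDATE triggered from an internal review screen.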

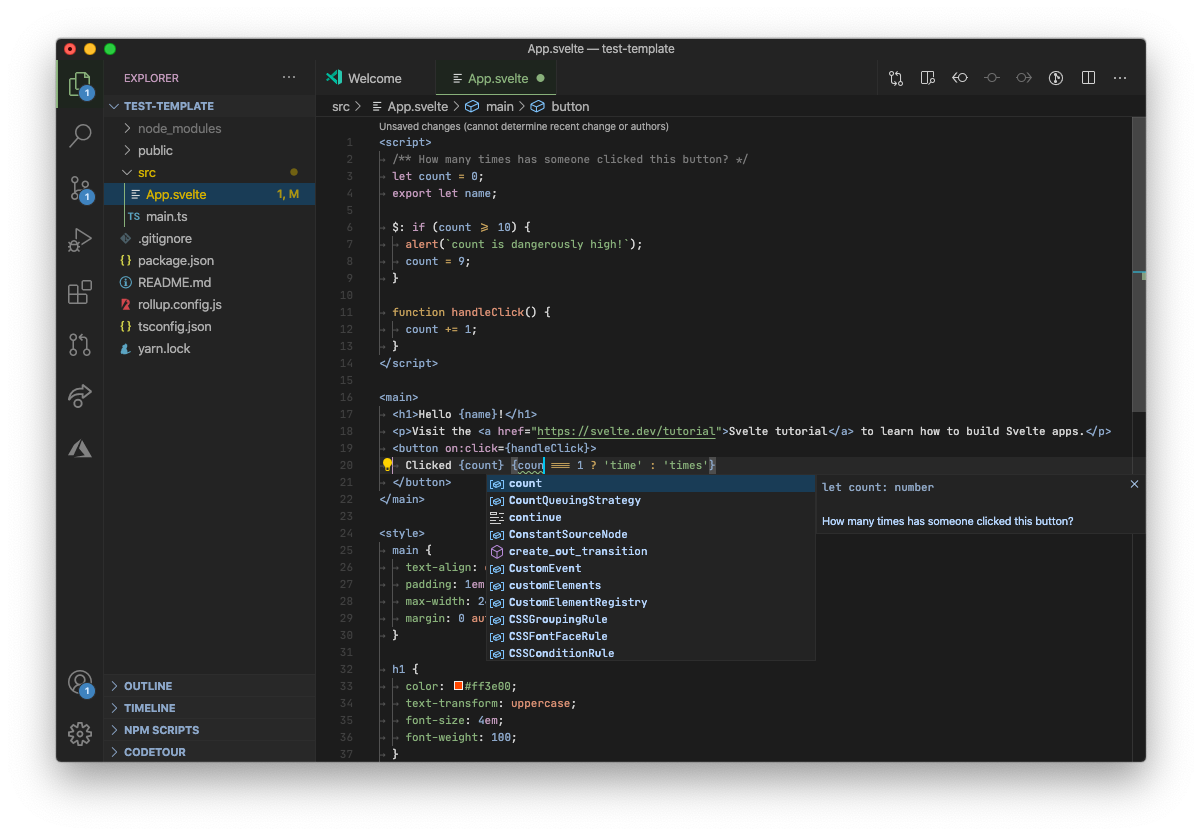

Vibe Coding Is Real — But Production Is a Different Game

Vibe coding — using tools like Cursor, v0, Bolt.new, or Lovable to build apps through conversation — has genuinely changed how fast you can go from idea to working prototype. In 2026, you can scaffold a full Next.js app with auth, database, and AI features in an afternoon.

But here's what vibe coding won't tell you:

- Prompt injection is a real security risk in AI-powered apps

- Streaming LLM responses need proper error boundaries and loading states

- Vector indexes need tuning for production query performance

- AI API costs can spiral without rate limiting and caching strategies
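
To make the last point concrete, here is a minimal sketch of the two cheapest cost controls: a per-user rate limiter and a response cache. Both are in-memory and single-process, which is an assumption that rarely holds in production (a shared store would replace the `Map`s), and the class names are hypothetical.

```typescript
// Fixed-window rate limiter: at most `limit` calls per user per window.
class RateLimiter {
  private readonly limit: number;
  private readonly windowMs: number;
  private readonly windows = new Map<string, { start: number; count: number }>();

  constructor(limit: number, windowMs: number) {
    this.limit = limit;
    this.windowMs = windowMs;
  }

  // Returns true if the call is allowed. `now` is injectable for testing.
  allow(userId: string, now: number = Date.now()): boolean {
    const current = this.windows.get(userId);
    if (!current || now - current.start >= this.windowMs) {
      this.windows.set(userId, { start: now, count: 1 });
      return true;
    }
    if (current.count >= this.limit) return false;
    current.count += 1;
    return true;
  }
}

// Cache of model responses keyed by a normalized prompt, with a time-to-live.
class PromptCache {
  private readonly ttlMs: number;
  private readonly entries = new Map<string, { value: string; expiresAt: number }>();

  constructor(ttlMs: number) {
    this.ttlMs = ttlMs;
  }

  // Treat prompts that differ only in case or whitespace as the same key.
  private key(prompt: string): string {
    return prompt.trim().toLowerCase().replace(/\s+/g, " ");
  }

  get(prompt: string, now: number = Date.now()): string | undefined {
    const k = this.key(prompt);
    const entry = this.entries.get(k);
    if (!entry) return undefined;
    if (now >= entry.expiresAt) {
      this.entries.delete(k); // expired: drop it so the caller refetches
      return undefined;
    }
    return entry.value;
  }

  set(prompt: string, value: string, now: number = Date.now()): void {
    this.entries.set(this.key(prompt), { value, expiresAt: now + this.ttlMs });
  }
}
```

A typical request path checks the limiter first, then the cache, and only calls the model on a cache miss.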

The gap between a vibe-coded prototype and a production-ready AI app is where real engineering happens. And that's exactly where experienced Next.js + TypeScript + Postgres developers earn their value.

How AI Has Changed the Developer's Daily Life

Let's be honest about what's actually changed:

- Code generation: GitHub Copilot and Cursor now handle boilerplate, repetitive patterns, and even full component generation. You spend more time reviewing than writing.

- Debugging: AI explains stack traces, suggests fixes, and catches edge cases you'd miss at 2am.

- Documentation: AI drafts docs straight from the code, so keeping a README current becomes a quick regenerate-and-review step instead of a chore.

- Architecture decisions: Tools like Claude and GPT-4 are now legitimate sounding boards for system design.

The developer who uses AI well ships 2–3x faster. The developer who ignores it is already behind.

What You Should Be Doing Right Now

If you're a developer or a business looking to add AI to your product, here's the priority order:

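- Add pgvector to your Postgres database: where the extension is available, as it is on many managed Postgres services, enabling it takes one SQL statement (CREATE EXTENSION vector), and semantic search works as soon as your embeddings are stored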

- Integrate the Vercel AI SDK — the fastest path to streaming LLM responses in Next.js

- Build one AI feature end-to-end — smart search, a content generator, or a recommendation engine. Ship it. Learn from it.

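- Audit your prompts for security: treat everything that reaches a prompt (user text, retrieved documents, tool output) as untrusted input. Validate it, cap its length, and rate-limit the endpoints that accept it.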

- Measure AI API costs from day one — log every token, set budget alerts, cache aggressively
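
The last two items can start very small. The sketch below shows basic input hygiene and per-call cost tracking with a budget flag. All names are hypothetical, and the prices are placeholder numbers rather than any provider's real rates. Note that input validation narrows the attack surface but does not by itself prevent prompt injection.

```typescript
// Token usage as reported by the model provider for one call.
interface Usage {
  inputTokens: number;
  outputTokens: number;
}

// Placeholder prices in USD per one million tokens. These are assumptions:
// replace them with your provider's current rates.
const PRICE_PER_MILLION = { input: 0.5, output: 2.0 };

// Cost of a single call in USD.
function callCostUsd(usage: Usage): number {
  return (
    (usage.inputTokens * PRICE_PER_MILLION.input +
      usage.outputTokens * PRICE_PER_MILLION.output) /
    1e6
  );
}

// Running total with a budget flag the caller can turn into an alert.
class CostTracker {
  private totalUsd = 0;
  private readonly budgetUsd: number;

  constructor(budgetUsd: number) {
    this.budgetUsd = budgetUsd;
  }

  record(usage: Usage): { totalUsd: number; overBudget: boolean } {
    this.totalUsd += callCostUsd(usage);
    return { totalUsd: this.totalUsd, overBudget: this.totalUsd > this.budgetUsd };
  }
}

// Basic hygiene for text that will be placed into a prompt:
// strip control characters (keeping tabs and newlines), trim, and cap the length.
function validatePromptInput(
  input: string,
  maxLength = 2000,
): { ok: true; value: string } | { ok: false; error: string } {
  const cleaned = input
    .replace(/[\u0000-\u0008\u000B\u000C\u000E-\u001F\u007F]/g, "")
    .trim();
  if (cleaned.length === 0) return { ok: false, error: "input is empty" };
  if (cleaned.length > maxLength) {
    return { ok: false, error: `input exceeds ${maxLength} characters` };
  }
  return { ok: true, value: cleaned };
}
```

Logging the result of `record` alongside the user and feature that triggered the call is usually enough to see where spend concentrates before any dashboard exists.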

Looking to Add AI to Your Product?

Whether you're a startup wanting to ship an AI-powered MVP, an enterprise looking to add intelligent features to an existing platform, or a team that wants to move from vibe-coded prototype to production-grade AI app — the stack is ready. The patterns are proven. The only question is execution.

At Tech Inject, we build AI-first web applications using Next.js, TypeScript, and PostgreSQL. From semantic search and personalization engines to full AI pipelines and agentic workflows — we've done it, and we can do it for you.

Let's build something intelligent.